Principal Component Analysis (PCA): Theory

In real-world scenarios, data analysis tasks often involve multi-dimensional data. We analyse the data and try to find patterns in it.

Here, the number of dimensions is the number of features (variables) that describe each data point x. As the number of dimensions increases, the data becomes harder to visualize and more expensive to compute on. So how can we reduce the dimensions of a data set?

- Remove the redundant dimensions

- Keep only the most important dimensions

To reduce the dimensions of the data we use principal component analysis. Before we dive into how PCA works, let's understand some key terminology that we will use later.

Variance:

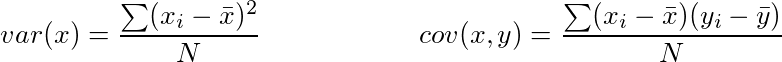

It is a measure of variability: it simply measures how spread out the data set is. Mathematically, it is the average squared deviation from the mean. The formula for the variance var(x) is shown in the image above.

Covariance: It is a measure of the extent to which corresponding elements from two sets of ordered data move in the same direction. The formula is shown above, where cov(x,y) denotes the covariance of x and y.

Here, xi is the ith value (observation) of x, and x bar and y bar denote the corresponding mean values.

One way to read the covariance is as a measure of how interrelated two data sets are.
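The variance and covariance definitions above can be sketched in a few lines of NumPy. This is a minimal illustration, not a reference implementation: the arrays `x` and `y` are made-up sample data, and the helper names `variance` and `covariance` are hypothetical. The formula in the image may divide by n rather than n - 1; the assumed `ddof` argument switches between the two conventions.

```python
import numpy as np

# Hypothetical sample data: two variables observed five times each.
x = np.array([2.0, 4.0, 6.0, 8.0, 10.0])
y = np.array([1.0, 3.0, 2.0, 5.0, 4.0])


def variance(a, ddof=1):
    """Average squared deviation from the mean.

    ddof=1 divides by n - 1 (sample variance); ddof=0 divides by n.
    """
    return np.sum((a - a.mean()) ** 2) / (len(a) - ddof)


def covariance(a, b, ddof=1):
    """Average product of the paired deviations from each mean."""
    return np.sum((a - a.mean()) * (b - b.mean())) / (len(a) - ddof)


var_x = variance(x)
cov_xy = covariance(x, y)

print("var(x)    =", var_x)
print("cov(x, y) =", cov_xy)
```

The results should agree with NumPy's built-ins `np.var(x, ddof=1)` and `np.cov(x, y)[0, 1]`, which is a convenient way to check a hand-written formula.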

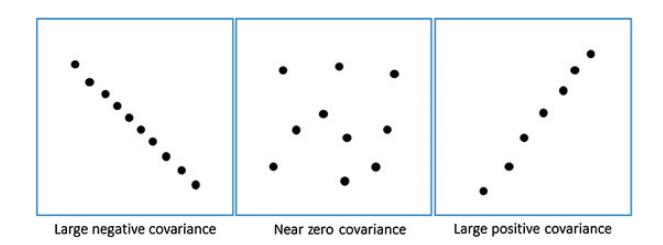

Positive, negative and zero covariance:

Positive covariance means X and Y are positively related, i.e. as X increases Y also tends to increase. Negative covariance describes the opposite relation: as X increases, Y tends to decrease. Zero covariance means X and Y have no linear relationship, although they may still be related in a non-linear way.
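A small sketch of the three cases, using made-up data. The variable names are illustrative only. The third case is chosen on purpose: `y_sq` is fully determined by `x`, yet its covariance with `x` is zero, because covariance only picks up linear relationships.

```python
import numpy as np

# Hypothetical data, symmetric around zero.
x = np.array([-2.0, -1.0, 0.0, 1.0, 2.0])

y_pos = 3.0 * x + 1.0    # rises as x rises
y_neg = -2.0 * x + 5.0   # falls as x rises
y_sq = x ** 2            # depends on x, but not linearly

# np.cov returns the 2x2 covariance matrix; entry [0, 1] is cov(x, y).
cov_pos = np.cov(x, y_pos)[0, 1]
cov_neg = np.cov(x, y_neg)[0, 1]
cov_sq = np.cov(x, y_sq)[0, 1]

print("positive case:", cov_pos)
print("negative case:", cov_neg)
print("non-linear case:", cov_sq)
```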

Eigenvectors and Eigenvalues:

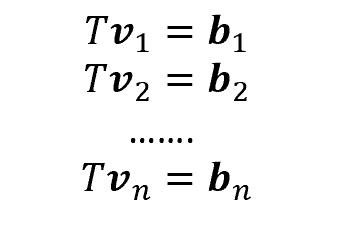

To better understand these concepts, let’s consider the following situation. We are provided with 2-dimensional vectors v1, v2, …, vn. Then, if we apply a linear transformation T (a 2×2 matrix) to our vectors, we will obtain new vectors, called b1, b2,…,bn.

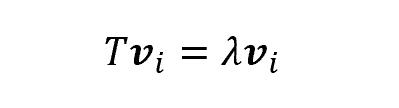

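Some of these vectors, though, have a very interesting property: when the transformation T is applied, they change length but stay on the same line, so their direction is preserved (or exactly reversed if the scaling factor is negative). Those vectors are called eigenvectors, and the scalar by which an eigenvector is multiplied is called its eigenvalue. For a symmetric matrix such as a covariance matrix, there are as many mutually orthogonal eigenvector directions as there are features.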

Thus, each eigenvector has a corresponding eigenvalue.
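The eigenvector property can be checked numerically. The sketch below assumes a made-up symmetric 2×2 matrix `T` (symmetric, like the covariance matrices PCA works with) and uses `np.linalg.eigh`, NumPy's routine for symmetric matrices. It verifies that applying `T` to each eigenvector only rescales it by the matching eigenvalue.

```python
import numpy as np

# Hypothetical 2x2 transformation; symmetric, like a covariance matrix.
T = np.array([[2.0, 1.0],
              [1.0, 2.0]])

# eigh returns eigenvalues in ascending order and
# unit-length eigenvectors as the columns of the second result.
eigenvalues, eigenvectors = np.linalg.eigh(T)

for i in range(len(eigenvalues)):
    v = eigenvectors[:, i]
    lam = eigenvalues[i]
    # Applying T only rescales v by its eigenvalue: T v = lambda v.
    assert np.allclose(T @ v, lam * v)
    print("eigenvalue", lam, "eigenvector", v)

# A vector that is not an eigenvector changes direction under T.
u = np.array([1.0, 0.0])
Tu = T @ u  # [2, 1], which is not a multiple of u
print("T applied to", u, "gives", Tu)
```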

When should I use PCA:

- If you want to reduce the number of variables but cannot identify which variables to remove from consideration entirely.

- If you want to ensure your variables are uncorrelated with each other.

- If you want to reduce the risk of overfitting your model.

- If you are comfortable making your independent variables less interpretable.

Background:

- PCA is an unsupervised statistical technique used to examine the interrelations among a set of variables in order to identify the underlying structure of those variables.

- It is sometimes described as a general form of factor analysis, although the two techniques are not identical.

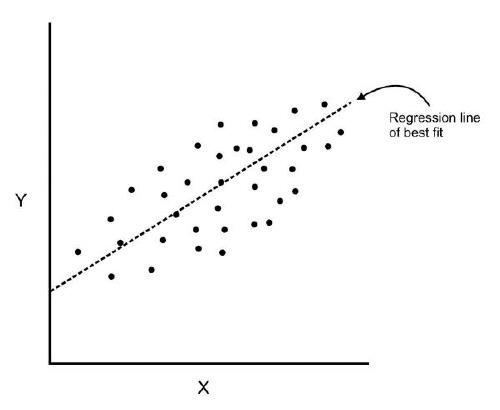

- Where regression determines a line of best fit to a data set, factor analysis determines several orthogonal lines of best fit to the data set.

- Orthogonal means “at right angles”.

- More precisely, the lines are perpendicular to each other in n-dimensional space.

- The n-dimensional space is the variable sample space.

- There are as many dimensions as there are variables, so in a data set with 4 variables the sample space is 4-dimensional.

- Here we have some data plotted along two features, x and y.

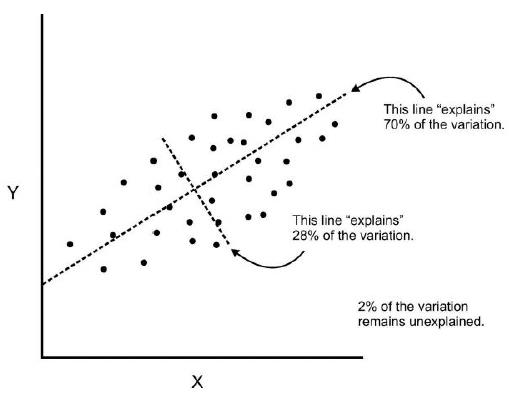

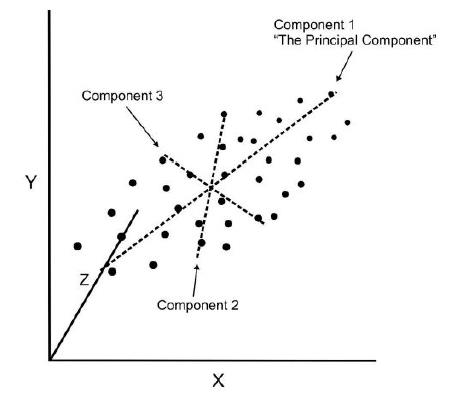

- We can add an orthogonal line. Now we can begin to understand the components!

- Components are the result of a linear transformation that chooses a new coordinate system for the data set such that the greatest variance of the data set comes to lie on the first axis.

- The second greatest variance lies on the second axis, and so on.

- This process allows us to reduce the number of variables used in an analysis.

- We can continue this analysis into higher dimensions.

- If we use this technique on a data set with a large number of variables, we can capture most of the variation in just a few components.

- The most challenging part of PCA is interpreting the components.

For our work with Python, we’ll walk through an example of how to perform PCA with scikit-learn. We usually want to standardize our data to a common scale before PCA, so we’ll cover how to do this as well.
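As a first taste, here is a minimal sketch of standardizing and then applying PCA with scikit-learn's `StandardScaler` and `PCA` classes. The data set is invented for illustration (a height in meters and a weight in grams, with the weight loosely tied to the height), and the variable names are assumptions, not part of any real data set. It compares the first component found on the raw data with the one found after standardization.

```python
import numpy as np
from sklearn.decomposition import PCA
from sklearn.preprocessing import StandardScaler

# Hypothetical data: two related features on very different scales.
rng = np.random.default_rng(0)
height_m = rng.normal(loc=1.7, scale=0.1, size=500)
weight_g = (70000.0 + 50000.0 * (height_m - 1.7)
            + rng.normal(scale=8000.0, size=500))
X = np.column_stack([height_m, weight_g])

# PCA on the raw data: the large-scale feature dominates.
pca_raw = PCA(n_components=2).fit(X)

# Standardize each feature to zero mean and unit variance, then refit.
X_scaled = StandardScaler().fit_transform(X)
pca_scaled = PCA(n_components=2).fit(X_scaled)

# Reduce the standardized data from 2 dimensions to 1.
X_reduced = PCA(n_components=1).fit_transform(X_scaled)

print("first component, raw data:   ", pca_raw.components_[0])
print("first component, scaled data:", pca_scaled.components_[0])
print("explained variance ratio (scaled):",
      pca_scaled.explained_variance_ratio_)
print("reduced shape:", X_reduced.shape)
```

On the raw data the first component points almost entirely along the weight axis, simply because grams produce much larger numbers than meters. After standardization both features contribute equally to the first component.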

PCA Algorithm

- Calculate the covariance matrix of the data points.

- Calculate the eigenvectors and corresponding eigenvalues of the covariance matrix.

- Sort the eigenvectors according to their eigenvalues in decreasing order.

- Choose the first k eigenvectors; these define the new k dimensions.

- Transform the original n-dimensional data points into k dimensions by projecting the mean-centered data onto these eigenvectors.
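The steps above can be sketched directly in NumPy. This is an illustrative implementation under stated assumptions, not how any particular library does it: the function name `pca` and the synthetic data are made up, and the data is mean-centered before projection.

```python
import numpy as np


def pca(X, k):
    """Project the rows of X (samples x features) onto the top-k components.

    Returns the projected data, all eigenvalues (sorted in decreasing
    order), and the k chosen eigenvectors as columns.
    """
    # Center the data so each feature has zero mean.
    X_centered = X - X.mean(axis=0)

    # Step 1: covariance matrix of the features.
    cov = np.cov(X_centered, rowvar=False)

    # Step 2: eigenvalues and eigenvectors (eigh suits symmetric matrices).
    eigenvalues, eigenvectors = np.linalg.eigh(cov)

    # Step 3: sort by eigenvalue, largest first.
    order = np.argsort(eigenvalues)[::-1]
    eigenvalues = eigenvalues[order]
    eigenvectors = eigenvectors[:, order]

    # Step 4: keep the first k eigenvectors.
    components = eigenvectors[:, :k]

    # Step 5: project the data onto the k new dimensions.
    return X_centered @ components, eigenvalues, components


# Hypothetical data: 200 samples, 3 features, two of them strongly related.
rng = np.random.default_rng(0)
t = rng.normal(size=200)
X = np.column_stack([
    t,
    2.0 * t + rng.normal(scale=0.1, size=200),
    rng.normal(scale=0.5, size=200),
])

X_reduced, eigenvalues, components = pca(X, k=2)
print("reduced shape:", X_reduced.shape)
print("eigenvalues:", eigenvalues)
```

The variance of each projected column equals the corresponding eigenvalue, which is why sorting by eigenvalue puts the directions of greatest variance first.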

Advantages of PCA

- Removes Correlated Features: In real-world scenarios, it is very common to have thousands of features in a dataset. Running an algorithm on all of them can hurt its performance, and that many features cannot easily be visualized in any kind of graph. So you need to reduce the number of features, which means finding the correlations among them (the correlated variables). Finding correlations manually among thousands of features is nearly impossible, frustrating and time-consuming. PCA does this for you efficiently.

- Improves Algorithm Performance: With so many features, the performance of your algorithm can degrade drastically. PCA is a very common way to speed up a Machine Learning algorithm by getting rid of correlated variables that contribute little to decision making. Training time drops significantly with fewer features. So, if the input dimensionality is too high, using PCA to speed up the algorithm is a reasonable choice.

- Improves Visualization: It is very hard to visualize and understand data in high dimensions. PCA transforms high-dimensional data into low-dimensional data (for example, 2 dimensions) so that it can be visualized easily. We can use a 2D scree plot to see which Principal Components account for the most variance and so have more impact than the other Principal Components.

Disadvantages of PCA

- Independent variables become less interpretable: After applying PCA to the dataset, your original features turn into Principal Components. Principal Components are linear combinations of your original features, so they are not as readable and interpretable as the original features.

- Data standardization is a must before PCA: You must standardize your data before applying PCA; otherwise PCA will not be able to find the optimal Principal Components. For instance, if a feature set has data expressed in units such as kilograms, light years, or millions, the variances of the features differ hugely in scale. If PCA is applied to such a feature set, the resulting loadings for features with high variance will also be large. Hence, the principal components will be biased towards features with high variance, leading to misleading results.

- Information Loss: Although Principal Components try to capture the maximum variance among the features in a dataset, if we don’t select the number of Principal Components with care, we may lose some information compared to the original set of features.
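One common way to limit the information loss described above is to pick the number of components from the cumulative share of variance they explain. The sketch below assumes a made-up set of eigenvalues and an arbitrary 90% target; both are illustrative choices, and a suitable threshold depends on the problem.

```python
import numpy as np

# Hypothetical eigenvalues of a covariance matrix, sorted in decreasing order.
eigenvalues = np.array([4.0, 2.5, 1.0, 0.3, 0.2])

# Share of the total variance carried by each component.
explained_ratio = eigenvalues / eigenvalues.sum()

# Running total: variance retained when keeping the first 1, 2, ... components.
cumulative = np.cumsum(explained_ratio)

# Assumed target: keep at least 90% of the variance.
threshold = 0.90

# Smallest k whose cumulative share reaches the threshold.
k = int(np.searchsorted(cumulative, threshold) + 1)

# Share of the variance discarded by dropping the remaining components.
information_lost = 1.0 - cumulative[k - 1]

print("explained ratio:", explained_ratio)
print("cumulative:     ", cumulative)
print("components kept:", k)
print("variance lost:  ", information_lost)
```

Plotting `explained_ratio` against the component index gives the scree plot mentioned under the advantages.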